The Role of Virtual Try-On in Modern Reverse Logistics

Laura B

Marketing Analyst

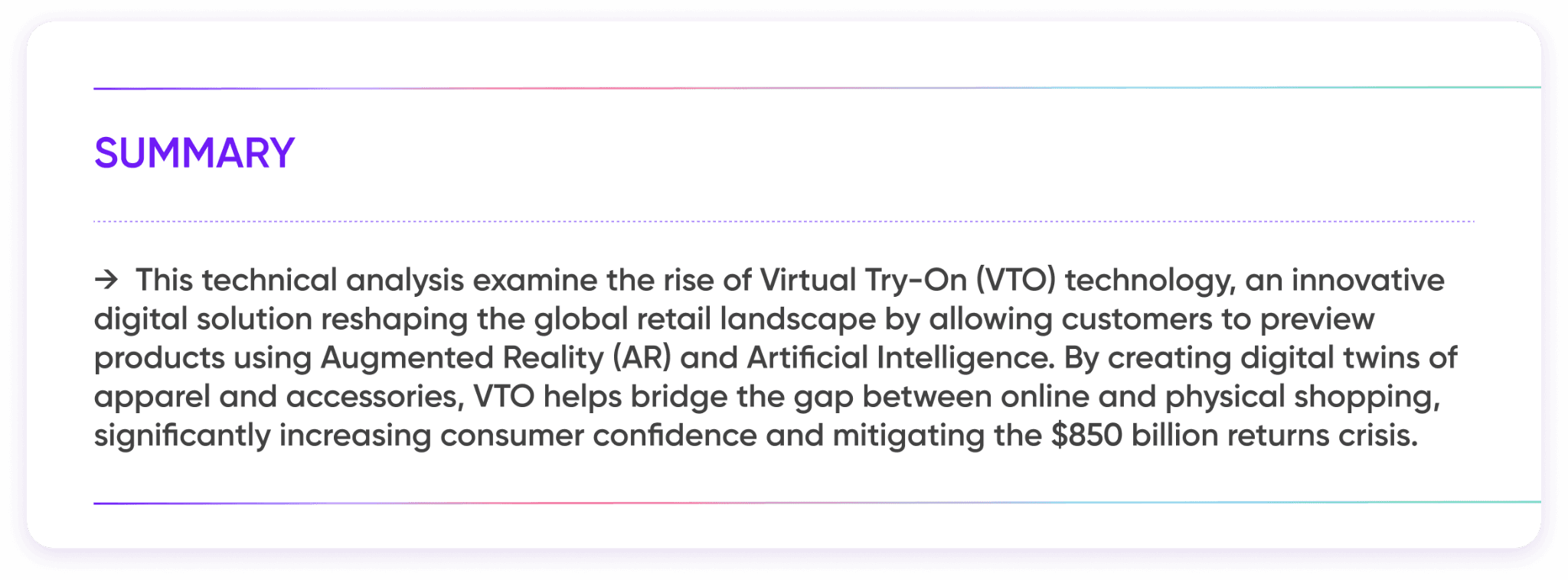

The modern e-commerce margin is not being destroyed by a lack of sales; it is evaporating due to the logistical friction inherent in consumer uncertainty.

According to the NRF 2025 Retail Returns Landscape Report, total retail returns have escalated to $849.9 billion, representing 15.8% of total sales. In the e-commerce sector the figure is even more dire, with online return rates hitting 19.3%. For today's retailers, "bracketing" (the consumer habit of purchasing multiple sizes with the intent of returning most) has shifted from a niche behaviour to an operational tax that erodes up to 20% of annual revenue. This is where Virtual Try-On (VTO) shifts the dressing room from a consumer's best guess to a brand's mathematical proof, finally aligning digital expectations with physical reality.

Demographic Impact

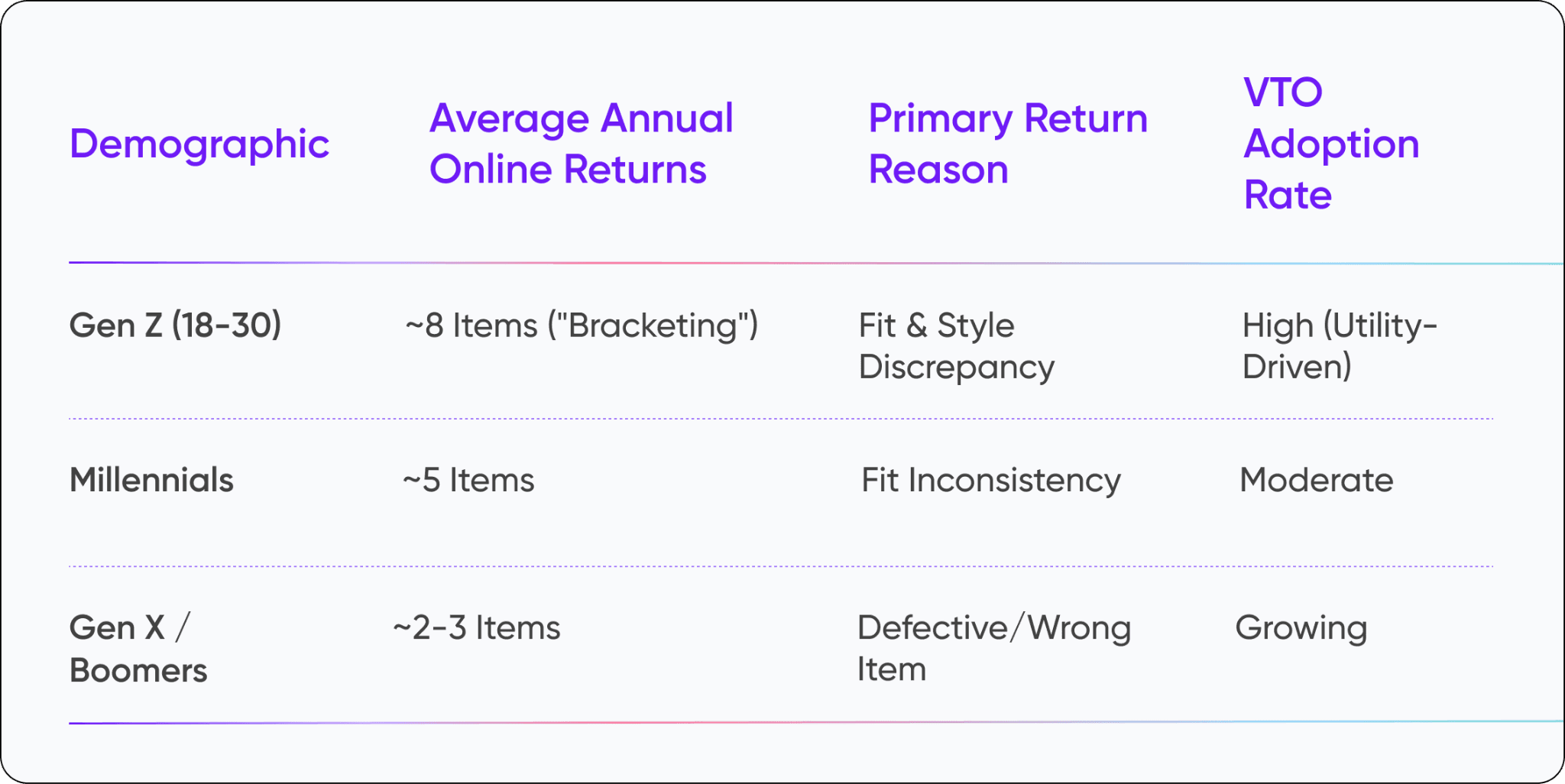

VTO utility varies across demographics, and it primarily addresses the "bracketing" habits of younger consumers.

→ What is Virtual Try-On (VTO) Technology?

Virtual Try-On (VTO) is a spatial computing application that integrates Augmented Reality (AR) and computer vision. While the industry attempted to solve fit issues in the early 2010s, those efforts were largely aesthetic failures. Today, modern VTO leverages a sophisticated mix of generative AI and photogrammetry, and the technology has evolved from a complex, gated novelty into an accessible utility.

Key developments include the following:

Cloud-Native Accessibility: Retailers no longer need to build proprietary engines from scratch; hyperscalers like Google Cloud now provide these capabilities as a service.

Generative AI Models: Platforms like Google’s Vertex AI use diffusion models to create try-on images that maintain garment integrity while automatically adapting to diverse body types and poses. To build these applications, developers often rely on Hugging Face, often described as the "GitHub for machine learning", where they can access, share, and collaborate on the open-source models that power these virtual try-on features.

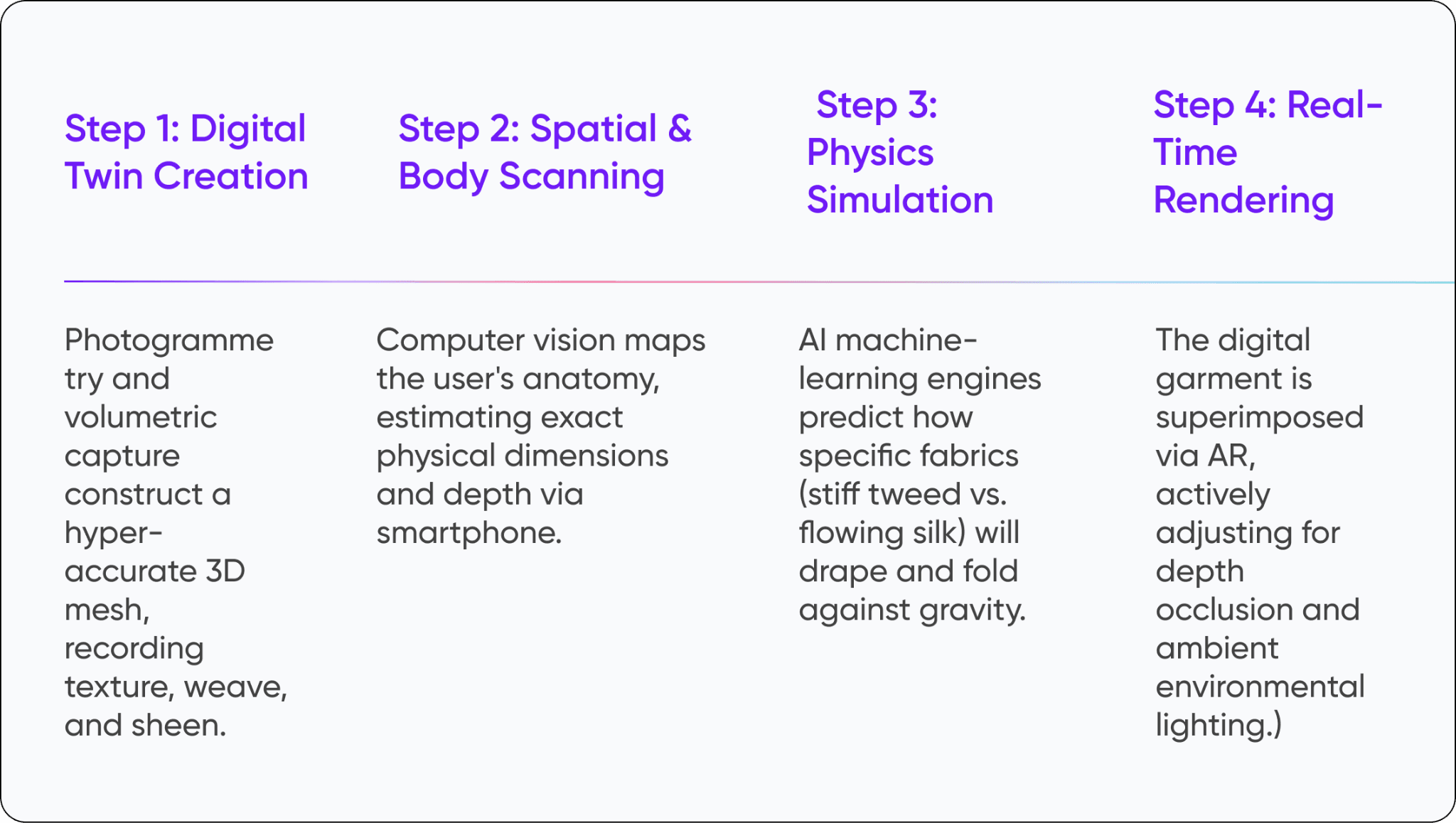

→ How Does Virtual Try-On Technology Work for Clothing?

Virtual Try-On (VTO) for apparel relies on specialized generative AI models that map 2D or 3D garments onto a user’s live video feed or uploaded photo. To understand how virtual try-on technology works for clothing, one must look at the orchestration of three core components:

Human Pose Estimation: Using deep learning, the system identifies key landmarks on the user’s body (shoulders, waist, limbs) to create a skeletal map.

3D Asset Management and Warping: The digital garment is not just a flat image; it is an intelligent asset. Diffusion Models and GANs (Generative Adversarial Networks) calculate how the fabric should stretch, fold, or drape based on the user's specific posture.

Real-Time Render Engines: High-performance engines overlay the transformed garment onto the user, adjusting for lighting conditions and occlusions to maintain immersion.
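To make the hand-off between pose estimation and garment warping more concrete, here is a minimal sketch. It is a deliberate simplification: a 2D similarity transform (scale, rotation, translation) stands in for the learned warping that diffusion models or GANs would perform in a production system, and the landmark coordinates are hypothetical values rather than real model output. The function names `fit_similarity` and `warp_point` are invented for illustration.

```python
# Illustrative sketch only: a 2D similarity transform stands in for the
# learned warping stage. All coordinates below are hypothetical.


def fit_similarity(garment_left, garment_right, body_left, body_right):
    """Return (a, b) such that z -> a*z + b maps the garment's two shoulder
    anchors onto the user's two detected shoulder landmarks.

    Points are (x, y) pixel tuples. Treating them as complex numbers lets a
    single multiplication encode both scale and rotation.
    """
    g1, g2 = complex(*garment_left), complex(*garment_right)
    u1, u2 = complex(*body_left), complex(*body_right)
    if g1 == g2:
        raise ValueError("garment anchors must be distinct")
    a = (u2 - u1) / (g2 - g1)  # scale and rotation
    b = u1 - a * g1            # translation
    return a, b


def warp_point(point, transform):
    """Apply the fitted transform to one garment point."""
    a, b = transform
    z = a * complex(*point) + b
    return (z.real, z.imag)


# Hypothetical inputs: anchors on a garment template (left, right shoulder)
# and the shoulder landmarks a pose estimator might report for the user.
transform = fit_similarity((0, 0), (100, 0), (200, 300), (400, 300))

# Where the centre of the garment's hem lands on the user's image.
hem_on_user = warp_point((50, 150), transform)
```

In this toy case the user's shoulders are twice as far apart as the template's, so every garment point is scaled by two and shifted to the shoulder position. A real pipeline would fit many landmarks and deform the fabric non-rigidly, then pass the result to the render stage for lighting and occlusion handling.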

→ Optimizing Reverse Logistics and Conversion Rates

Reverse Logistics Optimization refers to the strategic reduction of costs and carbon emissions associated with returns by using Digital Fitting to ensure the initial purchase is correct. Processing a single return—including shipping, inspection, and liquidation—often costs a retailer more than the actual value of the refund.

Business Results of VTO Implementation

Conversion Spikes: While many retailers report a steady 10% increase in conversion, some sectors have seen jumps as high as 400%.

Reduced Return Rates: Mass-market brands are seeing measurable results. ASOS, for example, recently reported a 160-basis-point reduction in its returns rate following deep-tech AI investments.

Hyper-Personalization: Fitting room sessions generate data on exact body dimensions and style affinities, allowing brands to curate future recommendations and adjust manufacturing specs to match actual consumer silhouettes.
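Basis points are easy to misread, so a small worked calculation may help. One basis point is one hundredth of a percentage point, which makes a 160-basis-point reduction equal to 1.6 percentage points. The sketch below uses the 19.3% online return rate quoted earlier purely as a hypothetical baseline (it is not ASOS's actual rate), and the order volume is an invented figure.

```python
def rate_after_reduction(return_rate_pct, reduction_bps):
    """Return the new return rate in percent.

    100 basis points equal 1 percentage point, so the reduction is
    divided by 100 before being subtracted.
    """
    return round(return_rate_pct - reduction_bps / 100, 2)


def returns_avoided(orders, reduction_bps):
    """Estimate how many fewer orders come back for a given order volume."""
    return round(orders * reduction_bps / 10_000)


# Hypothetical baseline and volume, chosen only for illustration.
new_rate = rate_after_reduction(19.3, 160)
avoided = returns_avoided(1_000_000, 160)
```

Under these assumptions the return rate falls from 19.3% to 17.7%, and a retailer shipping one million orders would process roughly 16,000 fewer returns.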

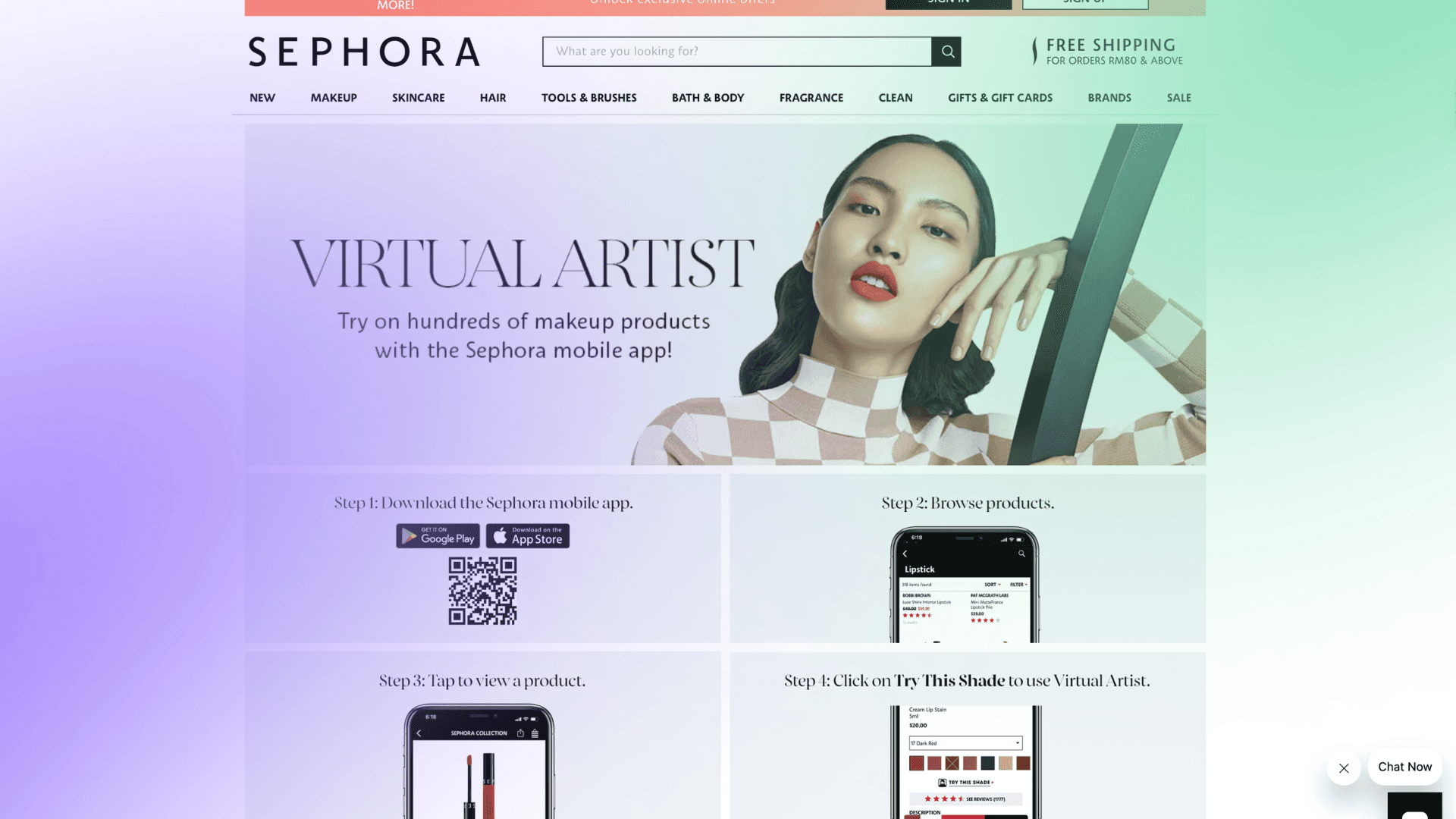

Sephora's Virtual Artist tool allows users to bypass physical testers by using augmented reality to "try on" specific makeup shades directly from their phones. The brand has perfected the digital replication of texture, color, and finish (matte vs. gloss), allowing risk-free experimentation with new shades and directly solving the fundamental skin-tone matching barrier in e-commerce.

→ Technical Infrastructure as a Data-Generation Tool

Beyond the consumer interface, VTO functions as a sophisticated sensor that generates metadata to inform and optimize the entire supply chain.

Body Dimension Mapping: By aggregating data on user sizes, brands can recalibrate manufacturing specifications to align with the actual silhouettes of their customer base.

Fabric Interaction Data: Tracking items that are "tried on" but rejected helps identify technical design flaws, such as perceived stiffness or poor draping.

Intent Signaling: Digital fitting sessions offer a more robust signal of purchase intent than standard "add to cart" actions, facilitating more accurate inventory forecasting.
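As a rough illustration of how try-on sessions could be turned into the signals described above, the following sketch aggregates a stream of events into a per-SKU try-on-to-purchase rate and flags items that are frequently tried on but rarely bought. The event schema (`(sku, action)` pairs), the SKU names, and the 20% threshold are all assumptions made for this example, not part of any specific VTO platform.

```python
from collections import defaultdict


def try_on_conversion(events):
    """Compute purchases per try-on for each SKU.

    `events` is an iterable of (sku, action) pairs where action is either
    "try_on" or "purchase" (a hypothetical schema). Other actions are ignored.
    """
    counts = defaultdict(lambda: {"try_on": 0, "purchase": 0})
    for sku, action in events:
        if action in ("try_on", "purchase"):
            counts[sku][action] += 1
    return {
        sku: c["purchase"] / c["try_on"]
        for sku, c in counts.items()
        if c["try_on"] > 0
    }


def flag_rejected(rates, threshold=0.2):
    """Return SKUs whose try-on conversion falls below the threshold."""
    return sorted(sku for sku, rate in rates.items() if rate < threshold)


# Hypothetical session log.
events = (
    [("JKT-01", "try_on")] * 4
    + [("JKT-01", "purchase")] * 2
    + [("DRS-07", "try_on")] * 10
    + [("DRS-07", "purchase")] * 1
)

rates = try_on_conversion(events)
needs_review = flag_rejected(rates)
```

Here the dress converts on only one in ten try-ons, so it would be surfaced for a design or fit review, while the jacket's rate could feed inventory forecasting.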

→ Implementation Roadmap: Integrating VTO into Your Stack

Retailers no longer need to build proprietary engines. Hyperscalers like Google Cloud provide foundational AI models through Vertex AI. Success depends on a disciplined technical sequence:

1. Select Architecture

Prioritize WebAR platforms (e.g., Zakeke, Threekit) integrated directly into the browser. Avoid standalone app downloads.

2. Forge Digital Twins

Invest in high-fidelity, low-poly 3D models. Assets must be lightweight for mobile rendering but detailed enough for luxury quality.

3. Seamless Integration

Embed the AR trigger directly onto the existing e-commerce product page, positioned alongside the 'Add to Cart' button.

4. Quality Assurance

Rigorously test for depth occlusion errors, loading latency, and dynamic lighting glitches across varying mobile devices.

5. Social Amplification

Integrate sharing functionalities allowing users to seamlessly export try-on visuals to Instagram or TikTok, driving organic traffic.
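The quality-assurance step in the roadmap above lends itself to a simple automated check. The sketch below takes load-time samples per device, computes a nearest-rank 95th percentile, and lists the devices that exceed a latency budget. The device names, the sample values, and the 2,000 ms budget are hypothetical; a real test plan would set its own thresholds.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Return the nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def devices_over_budget(latencies_ms, budget_ms, pct=95):
    """List devices whose chosen percentile of load time exceeds the budget."""
    return sorted(
        device
        for device, samples in latencies_ms.items()
        if percentile_nearest_rank(samples, pct) > budget_ms
    )


# Hypothetical load-time measurements in milliseconds.
latencies_ms = {
    "flagship_phone": [800, 900, 950, 1000, 1100],
    "budget_phone": [1500, 1800, 2400, 2600, 3100],
}

failing = devices_over_budget(latencies_ms, budget_ms=2000)
```

Checking a high percentile rather than the average keeps slow outliers visible, which matters because those are the sessions most likely to be abandoned.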

→ Conclusion: What AI Can’t Fix (Yet)

While the "fun factor" of AR filters might initially attract users, for the consumer the ability to solve a fit problem outweighs the novelty of the digital mirror. VTO currently lacks sensory inputs like "hand-feel" or weight, yet it remains the most powerful visualization tool available to bridge the gap where physical touch is absent. By blending profitability with sustainability, VTO is reshaping the e-commerce landscape into one defined by precision rather than guesswork.
